PowerMark · Aircury × Smartgrade (Powermark)

PowerMark

Executive summary of the case study.

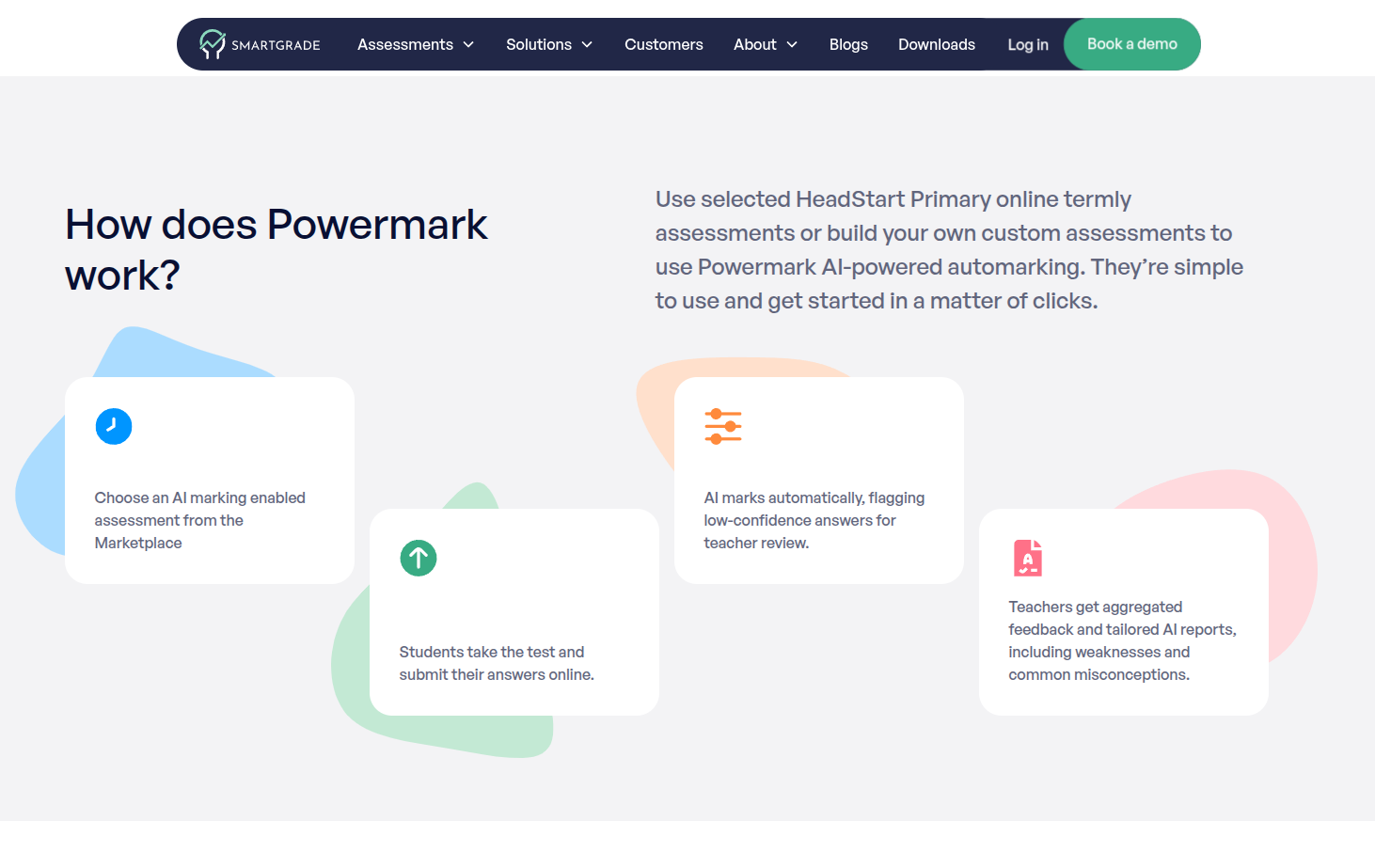

Powermark is Smartgrade’s AI auto-marking product. Per Smartgrade’s UK marketing site, it targets a sharp drop in marking time (customers report 65–90% savings versus traditional marking), real-time marking for HeadStart Primary Reading and GPS or for custom online papers, accuracy typically in the 94–97% range, no personally identifiable information sent to AI models, aggregated class and cohort feedback, and teacher moderation and override, with low-confidence answers surfaced first. At Aircury I designed and oversaw the PowerMark stack (OCR + NLP) integrated with Smartgrade for handwritten responses and tests, covering inference, data quality, and rollout with pedagogical teams.

Screenshots — Powermark (Smartgrade UK)

A long-form case study (our own metrics, model evaluation, and classroom operations) is in preparation. For partnerships or a walkthrough, write to hola@raul-alvarez.es.

FAQ

Are Powermark and PowerMark the same thing?

Powermark is Smartgrade’s product name for AI auto-marking. PowerMark is Aircury’s OCR+NLP work integrated with that platform.

What accuracy does Smartgrade publish?

They aim for 94%+ where marking guidance is detailed, and cite a large HeadStart Reading and GPS sample (~9,500 responses) at 97% AI accuracy vs 94% for teachers. Accuracy can fall into the 80–90% range when questions or guidance are weaker.

How do they mitigate common AI risks?

They describe per-answer confidence scoring, priority teacher review, random sampling with expert checks, and tight mark schemes; they state student data is not used for model training and PII is not passed to external LLMs.
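As an illustration only, the combination of per-answer confidence scoring, priority teacher review, and random sampling could be sketched as below. This is not Smartgrade’s or Aircury’s implementation: the `MarkedAnswer` type, the `build_review_queue` function, the 0.8 threshold, and the 10% sample rate are all hypothetical choices made for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class MarkedAnswer:
    answer_id: str
    awarded_marks: int
    confidence: float  # hypothetical model-reported score in [0.0, 1.0]


def build_review_queue(answers, threshold=0.8, sample_rate=0.1, seed=0):
    """Split AI-marked answers into a priority list and a spot-check sample.

    threshold and sample_rate are illustrative values, not published figures.
    """
    # Low-confidence answers go to the teacher first, least confident at the top.
    priority = sorted(
        (a for a in answers if a.confidence < threshold),
        key=lambda a: a.confidence,
    )
    # High-confidence answers are only sampled at random for an expert check.
    confident = [a for a in answers if a.confidence >= threshold]
    rng = random.Random(seed)
    k = min(len(confident), max(1, round(len(confident) * sample_rate))) if confident else 0
    spot_check = rng.sample(confident, k)
    return priority, spot_check


answers = [
    MarkedAnswer("a1", 2, 0.95),
    MarkedAnswer("a2", 0, 0.40),
    MarkedAnswer("a3", 1, 0.72),
    MarkedAnswer("a4", 2, 0.99),
    MarkedAnswer("a5", 1, 0.88),
]
priority, spot_check = build_review_queue(answers)
print([a.answer_id for a in priority])    # least confident first
print([a.answer_id for a in spot_check])  # random sample of the rest
```

Sorting the priority list by ascending confidence mirrors the "low-confidence answers surfaced first" behaviour described on the marketing site, while the seeded sample keeps the spot check reproducible for auditing.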

What was your role on PowerMark?

I owned inference pipeline design and oversight, data quality, and safe rollout with pedagogical stakeholders; the full article will spell out scope and limits.

What about paper assessments?

Smartgrade UK positions scanned paper marking as the next Powermark phase and invites schools/MATs to join a pilot via their sales channel.